Edge Platform Standard

The Helix line becomes the enterprise edge foundation.

SurgeXi is standardizing on the Helix node family as its primary enterprise edge hardware, with smaller and larger supporting node classes for environments that need a different fit.

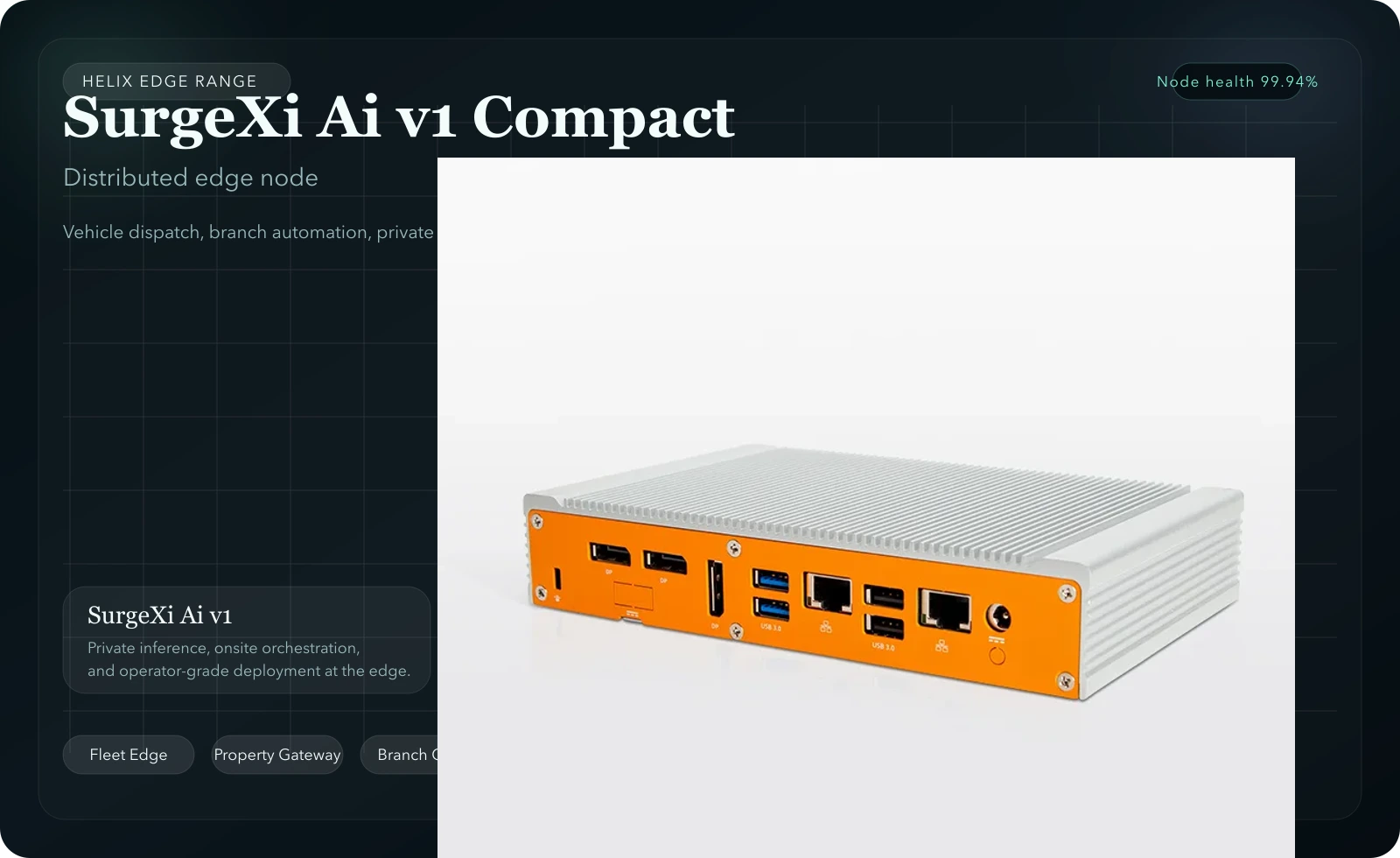

Put private inference and site automation closer to the building, home, or occupancy signals being monitored.

Run local orchestration, dispatch support, and intake intelligence where crews and vehicles are operating in real time.

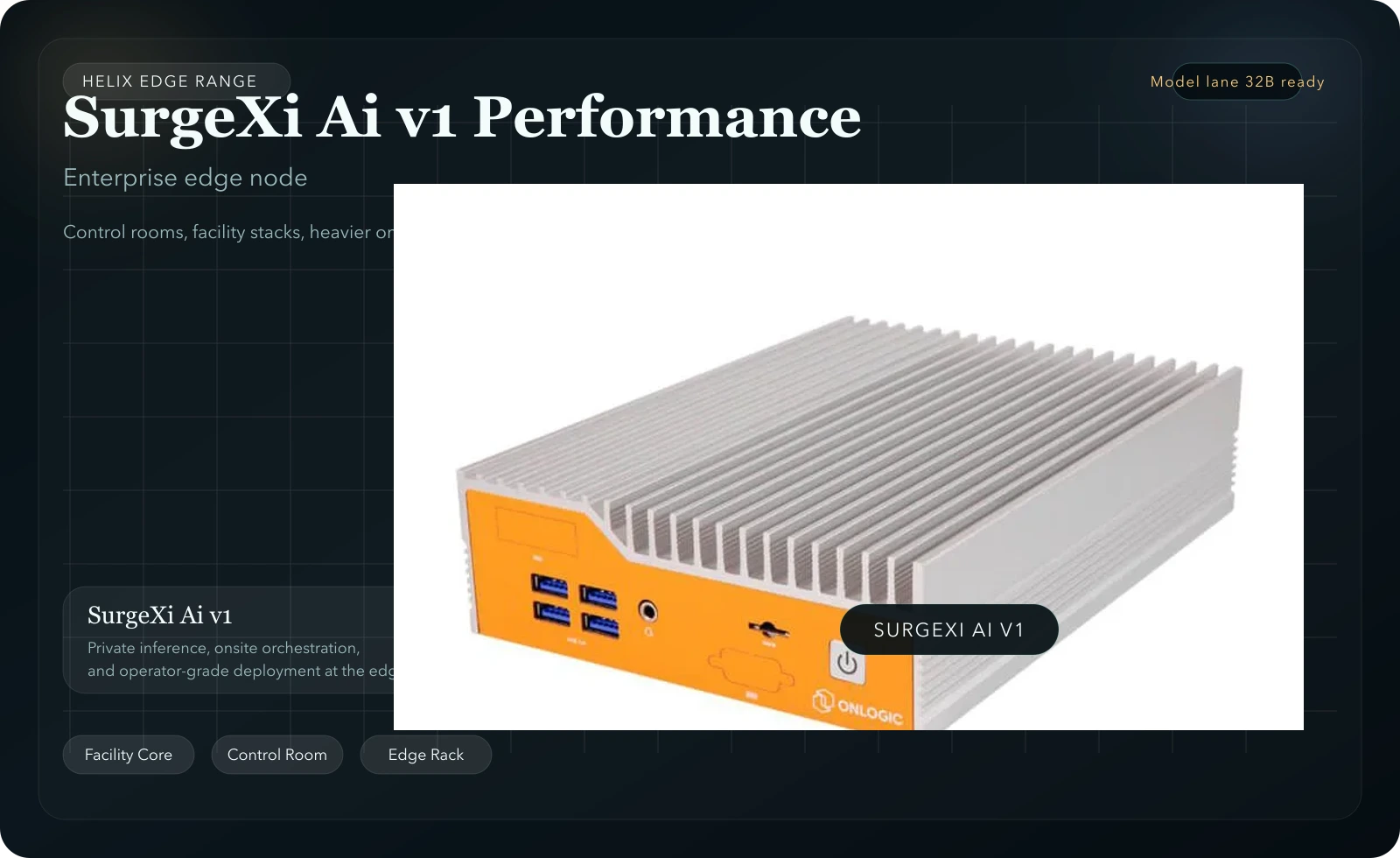

Use higher-performance nodes in control rooms and operational hubs to anchor heavier analytics and orchestration workloads.